Under Development

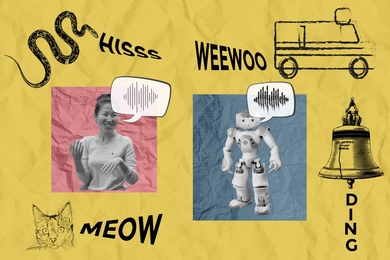

Whether you’re describing the sound of your faulty car engine or meowing like your neighbor’s cat, imitating sounds with your voice can be a helpful way to relay a concept when words don’t do the trick.

Vocal imitation is the sonic equivalent of doodling a quick picture to communicate something you saw — except that instead of using a pencil to illustrate an image, you use your vocal tract to express a sound. This might seem difficult, but it’s something we all do intuitively: To experience it for yourself, try using your voice to mirror the sound of an ambulance siren, a crow, or a bell being struck.

Inspired by the cognitive science of how we communicate, MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) researchers have developed an AI system that can produce human-like vocal imitations with no training, and without ever having "heard" a human vocal impression before.

To achieve this, the researchers engineered their system to produce and interpret sounds much like we do. They started by building a model of the human vocal tract that simulates how vibrations from the voice box are shaped by the throat, tongue, and lips. Then, they used a cognitively inspired AI algorithm to control this vocal tract model and make it produce imitations, taking into account the context-specific ways that humans choose to communicate sound.
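To make the vocal tract idea concrete, here is a minimal sketch of a classic source-filter synthesizer, a textbook simplification and not the researchers' actual model. A pulse train stands in for vibrations from the voice box, and a chain of resonators stands in for the shaping done by the throat, tongue, and lips. All function names, the sample rate, and the formant values are illustrative assumptions.

```python
import math

def glottal_source(f0_hz, duration_s, sample_rate=16000):
    """Impulse train standing in for voice-box vibrations (a deliberate simplification)."""
    n = int(duration_s * sample_rate)
    period = max(1, round(sample_rate / f0_hz))
    return [1.0 if i % period == 0 else 0.0 for i in range(n)]

def resonator(signal, center_hz, bandwidth_hz, sample_rate=16000):
    """Two-pole resonator approximating a single vocal-tract resonance (formant)."""
    r = math.exp(-math.pi * bandwidth_hz / sample_rate)  # pole radius, always < 1 (stable)
    theta = 2.0 * math.pi * center_hz / sample_rate      # pole angle sets the center frequency
    a1 = 2.0 * r * math.cos(theta)
    a2 = -r * r
    out = []
    y1 = y2 = 0.0
    for x in signal:
        y = x + a1 * y1 + a2 * y2
        out.append(y)
        y2, y1 = y1, y
    return out

def synthesize_vowel(f0_hz, formants, duration_s=0.25, sample_rate=16000):
    """Pass the source through one resonator per (center_hz, bandwidth_hz) pair, then normalize."""
    signal = glottal_source(f0_hz, duration_s, sample_rate)
    for center_hz, bandwidth_hz in formants:
        signal = resonator(signal, center_hz, bandwidth_hz, sample_rate)
    peak = max(abs(s) for s in signal) or 1.0
    return [s / peak for s in signal]

if __name__ == "__main__":
    # Hypothetical formant values, roughly in the range of an "ah"-like vowel.
    samples = synthesize_vowel(120, [(700, 80), (1100, 90), (2500, 120)])
    print(len(samples), "samples synthesized")
```

In a sketch like this, changing the pitch (`f0_hz`) and the formant settings changes the sound that comes out. A controller that picks those settings to imitate a target sound is the role the article assigns to the AI algorithm, which this example does not attempt to reproduce.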

The model can take many real-world sounds and generate human-like imitations of them, including noises like leaves rustling, a snake’s hiss, and an approaching ambulance siren. It can also be run in reverse to guess real-world sounds from human vocal imitations, similar to how some computer vision systems can retrieve high-quality images based on sketches. For instance, the model can correctly distinguish the sound of a human imitating a cat’s “meow” versus its “hiss.”

In the future, this model could potentially lead to more intuitive “imitation-based” interfaces for sound designers, more human-like AI characters in virtual reality, and even methods to help students learn new languages.

The co-lead authors — MIT CSAIL PhD students Kartik Chandra SM ’23 and Karima Ma, and undergraduate researcher Matthew Caren — note that computer graphics researchers have long recognized that realism is rarely the ultimate goal of visual expression. For example, an abstract painting or a child’s crayon doodle can be just as expressive as a photograph.

“Over the past few decades, advances in sketching algorithms have led to new tools for artists, advances in AI and computer vision, and even a deeper understanding of human cognition,” notes Chandra. “In the same way that a sketch is an abstract, non-photorealistic representation of an image, our method captures the abstract, non-phono-realistic ways humans express the sounds they hear. This teaches us about the process of auditory abstraction.”

